I added LaunchDarkly observability to my Christmas-play pet casting app thinking I’d catch bugs. Instead, I unwrapped the perfect gift 🎁. Session replay shows me WHAT users do, and online evaluations show me IF my model made the right casting decision with real-time accuracy scores. Together, they’re like milk 🥛 and cookies 🍪 - each good alone, but magical together for production AI monitoring.

Discovery #1: Users’ 40-second patience threshold

I decided to use session replay to evaluate the average time it took users to go through each step in the AI casting process. Session replay is LaunchDarkly’s tool that records user interactions in your app - every click, hover, and page navigation - so you can watch exactly what users experience in real-time. The complete AI casting process takes 30-45 seconds: personality analysis (2-3s), role matching (1-2s), DALL-E 3 costume generation (25-35s), and evaluation scoring (2-3s). That’s a long time to stare at a loading spinner wondering if something broke.

What are progress steps?

Progress steps are UI elements I added to the app - not terminal commands or backend processes, but actual visual indicators in the web interface that show users which phase of the AI generation is currently running. These appear as a simple list in the loading screen, updating in real-time as each AI task completes. No commands needed - they automatically display when the user clicks “Get My Role!” and the AI processing begins.

Session replay revealed:

This made the difference:
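To make the idea concrete, here is a minimal Python sketch of progress steps modeled as a small state tracker. It is an illustration, not the app's actual code: the `ProgressTracker` class, the `STAGES` list, and the text markers are hypothetical names. Only the stage names and rough timings come from the casting process described above.

```python
from dataclasses import dataclass
from typing import List

# Stage names and rough durations (seconds) are taken from the timings
# quoted in this post; everything else here is a hypothetical sketch.
STAGES = [
    ("Personality analysis", 2, 3),
    ("Role matching", 1, 2),
    ("DALL-E 3 costume generation", 25, 35),
    ("Evaluation scoring", 2, 3),
]


@dataclass
class ProgressTracker:
    """Tracks how many stages have finished so a UI can render a step list."""

    completed: int = 0  # number of stages already finished

    def complete_next(self) -> None:
        # Advance by one stage, never past the final stage.
        if self.completed < len(STAGES):
            self.completed += 1

    def render(self) -> List[str]:
        # One line per stage: finished, currently running, or still waiting.
        lines = []
        for index, (name, low, high) in enumerate(STAGES):
            if index < self.completed:
                marker = "[done]"
            elif index == self.completed:
                marker = "[running]"
            else:
                marker = "[waiting]"
            lines.append(f"{marker} {name} (~{low}-{high}s)")
        return lines


if __name__ == "__main__":
    tracker = ProgressTracker()
    tracker.complete_next()  # personality analysis finished
    tracker.complete_next()  # role matching finished
    print("\n".join(tracker.render()))
```

A real web front end would redraw the list each time the backend reports a finished stage. The point of the sketch is that the longest stage, image generation, is visibly labeled as running instead of hiding behind a generic spinner.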

Clear progress steps:

Discovery #2: Observability + online evaluations give the complete picture

Session replay shows user behavior and experience. Online evaluations expose AI output quality through accuracy scoring. Together, they form a solid strategy for AI observability. To see this in action, let’s take a closer look at an example.

Example: The speed-running corgi owner

In this scenario, a user blazes through the entire pet app setup from the initial quiz to the final results, completing the process in record time. So fast, in fact, that speed ended up killing quality.

Session Replay Showed:

- Quiz completed in 8 seconds (world record) - they clicked the first option for every question

- Skipped photo upload entirely

- Waited the full 31 seconds for processing

- Got their result: “Sheep”

- Started rage clicking on the sheep image immediately

- Left the site without saving or sharing

- Evaluation Score: 38/100 ❌

- Reasoning: “Costume contains unsafe elements: eyeliner, ribbons”

- Wait, what? The AI suggested face paint and ribbons, evaluation said NO

- “Costume includes eyeliner which could be harmful to pets” (It’s a DALL-E image!)

- “Ribbons pose entanglement risk”

- “Bells are a choking hazard” (It’s AI-generated art!)

Example: The perfect match

Session Replay Showed:

- 45 seconds on quiz (reading each option)

- Uploaded photo, waited for processing

- Spent 2 minutes on results page

- Downloaded image multiple times

- Evaluation Score: 96/100 ⭐⭐⭐⭐⭐

- Reasoning: “Personality perfectly matches role archetype”

- Photo bonus: “Visual traits enhanced casting accuracy”

Discovery #3: The photo upload comedy gold mine

Session replay revealed what photos people ACTUALLY upload. Without it, you’d never know that one in three photo uploads are problematic, and you’d be flying blind on whether to add validation or trust your model.

Example: The surprising photo upload analysis

Session Replay Showed:

Example: When “bad” photos produce great results

My Favorite Session: Someone uploaded a photo of their cat mid-yawn. The AI vision model described it as “displaying fierce predatory behavior.” The cat was cast as a “Protective Father.” Evaluation score: 91/100. The owner downloaded it immediately.

The magic formula: Why this combo works (and what surprised me)

Without Observability:

- “The app seems slow” → ¯\_(ツ)_/¯

- “We have 20 visitors but 7 completions” → Where do they drop?

With Session Replay ONLY:

- “User got sheep and rage clicked; maybe left angry” → Was this a bad match?

With Model-Agnostic Evaluation ONLY:

- “Evaluation: 22/100 - Eyeliner unsafe for pets” → How did the user react?

- “Evaluation: 96/100 - Perfect match!” → How did this compare to the image they uploaded?

With BOTH:

- “User rushed, got sheep with ribbons, evaluation panicked about safety” → The out-of-the-box evaluation treats image generation prompts like real costume instructions

- “40% of low scores are costume safety, not bad matching” → Need custom evaluation criteria (coming soon!)

- “Users might think low score = bad casting, but it’s often = protective evaluation” → Would benefit from custom evaluation criteria to avoid this confusion
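The "With BOTH" reasoning above can be sketched as a small join between replay signals and evaluation results. This is a minimal Python sketch under stated assumptions, not LaunchDarkly's API or the app's real code: the `Session` fields, the `LOW_SCORE` cutoff, the `SAFETY_WORDS` keyword list, and the `diagnose` function are all hypothetical. The two sample sessions reuse the numbers from the corgi and perfect-match examples earlier in this post.

```python
from dataclasses import dataclass

# Hypothetical cutoff and keywords, chosen only for illustration.
LOW_SCORE = 50
SAFETY_WORDS = ("unsafe", "harmful", "hazard", "entanglement")


@dataclass
class Session:
    quiz_seconds: float   # from session replay
    uploaded_photo: bool  # from session replay
    rage_clicked: bool    # from session replay
    eval_score: int       # from online evaluation (0-100)
    eval_reason: str      # from online evaluation


def diagnose(session: Session) -> str:
    """Combine behavior and evaluation data into one rough label."""
    if session.eval_score >= LOW_SCORE:
        return "good_match"
    reason = session.eval_reason.lower()
    # A low score driven by safety wording says little about casting quality.
    if any(word in reason for word in SAFETY_WORDS):
        return "safety_flag"
    # A low score after a rushed quiz with no photo points at thin input.
    if session.quiz_seconds < 10 and not session.uploaded_photo:
        return "rushed_input"
    return "possible_bad_match"


speed_runner = Session(
    quiz_seconds=8,
    uploaded_photo=False,
    rage_clicked=True,
    eval_score=38,
    eval_reason="Costume contains unsafe elements: eyeliner, ribbons",
)
perfect_match = Session(
    quiz_seconds=45,
    uploaded_photo=True,
    rage_clicked=False,
    eval_score=96,
    eval_reason="Personality perfectly matches role archetype",
)

if __name__ == "__main__":
    for name, session in (("speed runner", speed_runner),
                          ("perfect match", perfect_match)):
        print(name, "->", diagnose(session))
```

Neither data source alone can produce the `safety_flag` label with any confidence. Replay only sees the rage click, and the evaluation only sees the score. Checking the reason text before the behavior signals is a deliberate choice in this sketch, since a safety-driven score would otherwise be misread as a bad casting.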

Your turn: See the complete picture

Want to add this observability magic to your own app? Here’s how:

- Install the packages

- Initialize with observability

- Configure online evaluations in dashboard

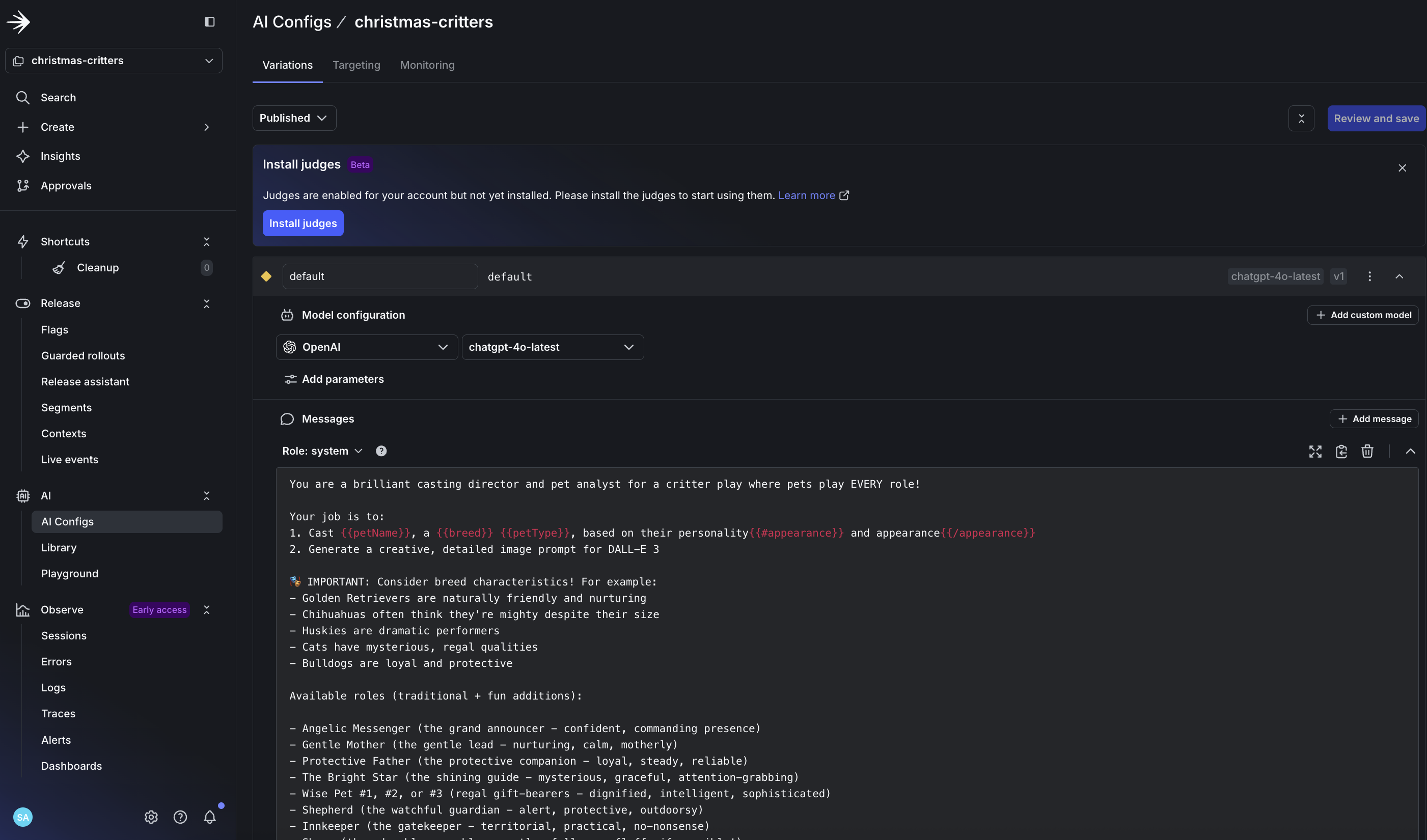

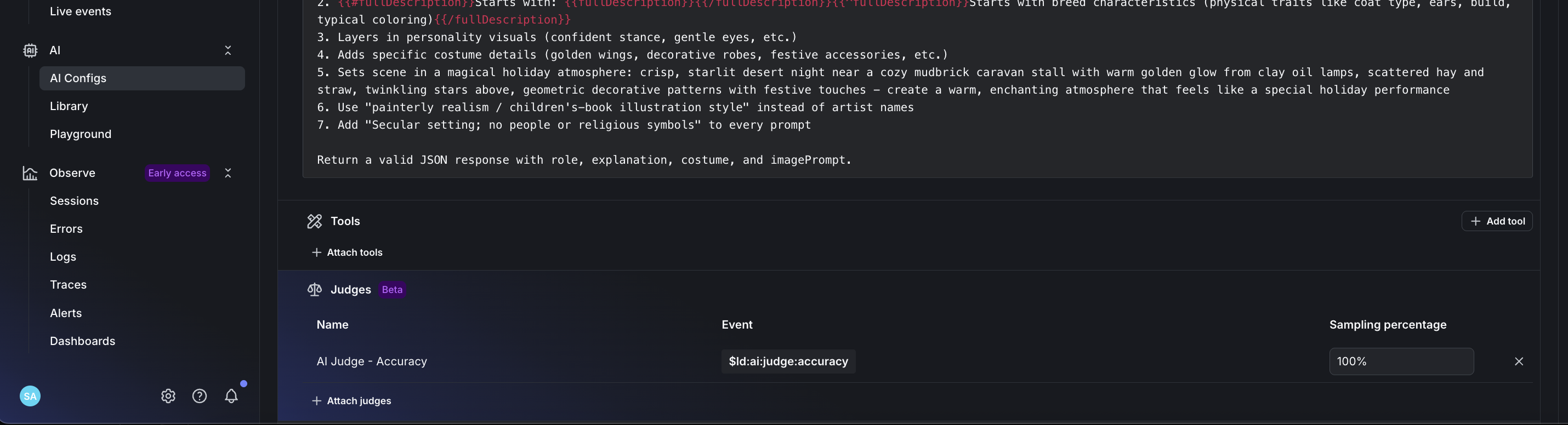

- Create your AI Config in LaunchDarkly for LLM evaluation

- Enable automatic accuracy scoring for production monitoring

- Set accuracy weight to 100% for production AI monitoring

- Monitor your AI outputs with real-time evaluation scoring

- Connect the dots

- Where users drop off

- What confuses them

- When they rage click

- How long they wait

- AI decision accuracy scores

- Why certain outputs scored low

- Pattern of good vs bad castings

- Safety concerns (even for pixels!)